Detection and Tracking

Camera sensor configuration, object and lane detection, tracking and sensor fusion

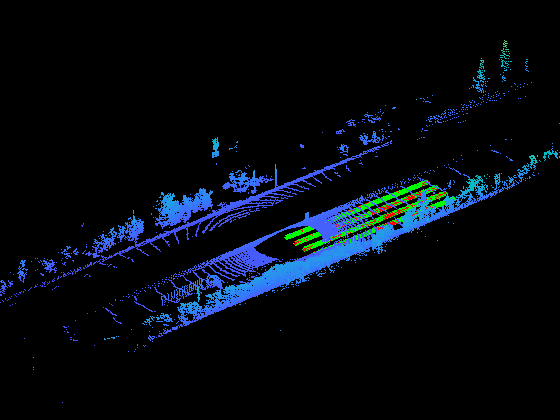

Automated Driving Toolbox™ perception algorithms use data from cameras and lidar scans to enhance situational awareness of the vehicle. With these algorithms, you can detect, locate, and track objects of interest in a driving scenario. You can apply deep learning and machine learning techniques to object detection, then fuse the resulting detections from multiple sensors to track objects in the environment. You can build on these algorithms to create autonomous driving applications such as automatic braking and steering.

Categories

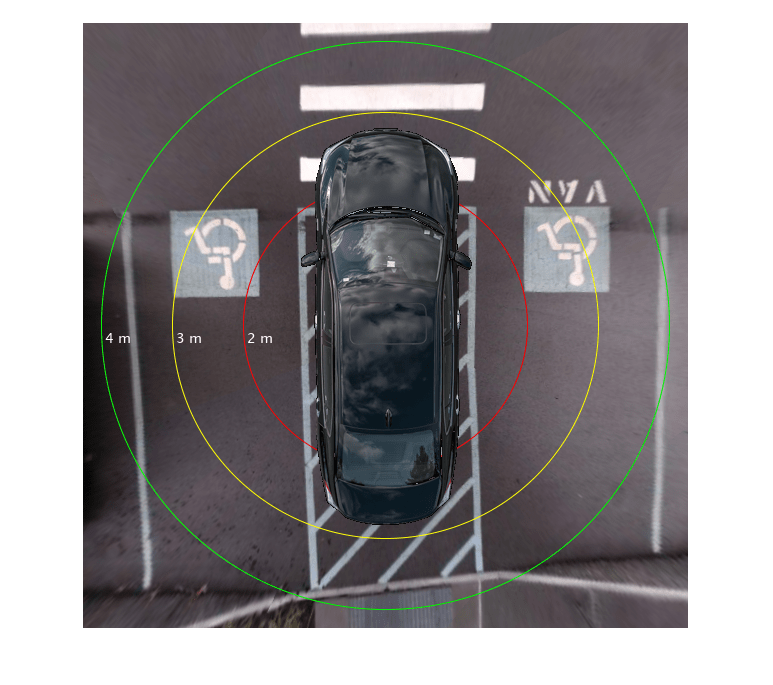

- Camera Sensor Configuration

  Monocular camera sensor calibration, image-to-vehicle coordinate system transforms, bird’s-eye-view image transforms

- Object and Lane Detection

  Lane boundary, pedestrian, vehicle, and other object detections using machine learning and deep learning

- Tracking and Sensor Fusion

  Object tracking and multisensor fusion, bird’s-eye plot of detections and object tracks